Alexander

Alexander

Pony version which supports Python 3.8 is already on GitHub, I plan to make official release at Monday

М

М

Hello! Is there a way to define Database() object after creating of models? I mean, model class should be derivated from db.Entity. Is it the only way?

М

М

To different databases, I mean. That could work simultaniously. But all databases have the same model.

М

М

Créat an entity_factory with database as agument

You mean, db object, as input, and model classes, as output, right?

М

М

Ok. I thought about it too, but I didn't really liked it, because that way I cant keep clean and simple model file. But if it's the only way, ok.

М

М

There is also a way to change base classes in runtime: https://stackoverflow.com/questions/9539052/how-to-dynamically-change-base-class-of-instances-at-runtime

but it's so hacky...

Alexander

Alexander

@mihart00 you don't need to return models. All entities are accessible from db.entities and via name db.Foo.

М

М

@mihart00 you don't need to return models. All entities are accessible from db.entities and via name db.Foo.

Yea, thanks, I missed that. But my main problem with that approach still stands: I should use all that nasty type() stuff on model creation.

М

М

There is also a way to change base classes in runtime: https://stackoverflow.com/questions/9539052/how-to-dynamically-change-base-class-of-instances-at-runtime

but it's so hacky...

If anyone interested, this is not working with my situation.

М

М

I just don't like, how it looks ^_^. Plus I can't copy-paste such model to project with simplier requirements + I want other people to work with that model file too, and i don't want them to have problems with that.

Valentin

Valentin

I personally don't like it bc of additional indentation and lack of type and field hinting.

Alexander

Alexander

Like your

class Foo(db.Entity):

attr1 = Required(int)

attr2 = Optional(str)

Becomes

def define_entity(db):

class Foo(db.Entity):

attr1 = Required(int)

attr2 = Optional(str)

М

М

Vitaliy

Vitaliy

Alexander, is there a simple way to do bulk insert/update using pony? My DB is constantly growing and now some queries take up to 3-6 seconds. I would not worry about this because these are periodic background task, but somehow whole my app freezes while these queries is being executed, even if I update/insert to tables which are not interlinked.

Marco

Marco

Hi, I have an entity defined in pony which is named "User".

If I connect to the PostgreSQL (I want to execute some PREPARE statements) and I do SELECT * FROM User I have this response:

postgres=# SELECT * FROM User;

user

----------

postgres

(1 row)

postgres=#

Marco

Marco

But if I list the tables I see it

postgres=# \dt

List of relations

Schema | Name | Type | Owner

--------+-------+-------+----------

public | xxxxx | table | postgres

public | yyyyy | table | postgres

public | user | table | postgres

(3 rows)

postgres=#

Lucky

Lucky

Hi, I have an entity defined in pony which is named "User".

If I connect to the PostgreSQL (I want to execute some PREPARE statements) and I do SELECT * FROM User I have this response:

postgres=# SELECT * FROM User;

user

----------

postgres

(1 row)

postgres=#

Maybe you are in the wrong database and that sone internal one? Or did you create a user called posgres?

Marco

Marco

Maybe you are in the wrong database and that sone internal one? Or did you create a user called posgres?

I'm connetected to the db with user='postgres', database='postgres' (i'm using docker compose)

Pony sees all the data

Matthew

Matthew

No, all of this basically fixes, like what you reported on broken bulk delete queries

Fantastic, thank you for your work!

Matthew

Matthew

Alexander, is there a simple way to do bulk insert/update using pony? My DB is constantly growing and now some queries take up to 3-6 seconds. I would not worry about this because these are periodic background task, but somehow whole my app freezes while these queries is being executed, even if I update/insert to tables which are not interlinked.

Can you share the query code, and which database you are using?

Matthew

Matthew

Hi, I have an entity defined in pony which is named "User".

If I connect to the PostgreSQL (I want to execute some PREPARE statements) and I do SELECT * FROM User I have this response:

postgres=# SELECT * FROM User;

user

----------

postgres

(1 row)

postgres=#

Use this query and it will work: select * from "user”;

Vitaliy

Vitaliy

Can you share the query code, and which database you are using?

DB is Postgres. Here is pseudocode:

for s in switches:

response = snmp.query_that_builds_tables_of_ports_and_rx_tx_counters

# but actualy it is calculated deltas (prev data stored in memory, db is not touched)

for port, rx, tx in response:

db.insert(SwitchTraffic._table_,

switch=name,

port=port,

rx=rx,

tx=tx,

timestamp=timestamp,

interval='hour',

classtype='SwitchTraffic'

)

commit()

I have about 10 switch each has about 45 ports. Task runs every minute. But I also have another simultaneous task which collects data from Virtual Servers (also about 450 items):

data = some_api.get_realtime_traffic_data()

uuid_map = some_api.build_mapping_of_server_ids_and_its_uuids()

for uuid, info in counters:

s = uuid_map.get(uuid)

if s:

db.insert(VirtualTraffic._table_,

interval='hour',

server=s,

rx=info['rx'],

tx=info['tx'],

timestamp=timestamp,

classtype='VirtualTraffic'

)

commit()

Both VirtualTraffic and SwitchTraffic derived from Traffic entity.

Vitaliy

Vitaliy

This cause such alerts in log (according pony's local db stats):

[2019-10-24 09:15:08,451] ('LONG SQL', 4901.0, 448, 'INSERT INTO "traffic" ("interval", "server", "rx", "tx", "timestamp", "classtype") VALUES (%(p1)s, %(p2)s, %(p3)s, %(p4)s, %(p5)s, %(p6)s)')

[2019-10-24 09:15:14,684] ('LONG SQL', 2216.33, 403, 'UPDATE "server"\nSET "updated" = %(p1)s, "status" = %(p2)s\nWHERE "id" = %(p3)s')

[2019-10-24 09:15:19,504] ('LONG SQL', 1749.1, 508, 'INSERT INTO "traffic" ("switch", "port", "rx", "tx", "timestamp", "interval", "classtype") VALUES (%(p1)s, %(p2)s, %(p3)s, %(p4)s, %(p5)s, %(p6)s, %(p7)s)')

Matthew

Matthew

I can’t tell easily from how the code was pasted - are you committing once per “cron job” or multiple times?

Vitaliy

Vitaliy

commit is executed after each switch (every ~50 records of ~500 total). In virtual server section commit is unnecessary, because it will be done implicitly on db_session exit. It seems I should use something like db.execute('INSERT INTO TABLE "traffic" (bla,bla, bla) VALUES(%s)' % build_all_data_at_once)

Matthew

Matthew

Try only committing at the end of all of the work, and see how it affects the execution speed

Matthew

Matthew

Then after that I would look at whether appropriate indexes are in place for any select queries

Vitaliy

Vitaliy

I tried to change code in different ways, but the result is +- the same. So I want to ask @metaprogrammer is there an internal API to build SQL for batch insert/update.

Vitaliy

Vitaliy

Indices is set properly and all queries are optimized, all of my selects executes within 20-40 ms regardless amount of data to fetch.

Matthew

Matthew

Have you tried recording all of the inserts to a file, then executing them directly with psql, and seeing how long that takes?

Alexander

Alexander

Alexander, is there a simple way to do bulk insert/update using pony? My DB is constantly growing and now some queries take up to 3-6 seconds. I would not worry about this because these are periodic background task, but somehow whole my app freezes while these queries is being executed, even if I update/insert to tables which are not interlinked.

Hi Vitaliy!

A this moment Pony does not provide API for bulk insert. Internally Pony uses executemany when populating many-to-many tables, but in PostgreSQL executemany does not work faster than plain execute.

In the future we can add support for execute_values:

http://initd.org/psycopg/docs/extras.html#fast-exec

As I can see, currently you use db.insert. The overhead of db.insert over cursor.execute should not bee too big, so I doubt you will get big performance increase from using raw execute.

If a single insert takes five seconds, then problem is not in Pony, but in the database. If you execute raw SQL it still going to take five seconds. So you need to optimize the database somehow.

This is some reasons why a single insert can take so much time:

1) Another transaction lockes the table or one of its indexes, and the insert operation waits until this lock will be released. I think this is the most probable reason. Do you use SELECT FOR UPDATE queries?

2) Table or index work slow because of corruption

https://dba.stackexchange.com/questions/169209/very-slow-inserts

3) Wrong tabe partitioning (not sure if it is actual for single insert performance)

https://dba.stackexchange.com/questions/243657/postgresql-11-slow-insert-performance-when-table-reaches-5-million-records

4) Some other reason caused by PostgreSQL architecture

https://eng.uber.com/mysql-migration/

Vitaliy

Vitaliy

Thank you for detailed answer! I'll learn all of links you gave. But it seems that the problem is not in single insert, but when these inserts goes one by one in amount of 400+. If I will solve this, I share the solution 🙂

Matthew

Matthew

I do over 10 million inserts per day using pony and don’t have performance issues relating to inserts

Alexander

Alexander

Try to write raw SQL by hand (it should not be too complex for a single table with known columns) and use execute_values:

https://hakibenita.com/fast-load-data-python-postgresql

Adam

Adam

Is there a way to use "select" to grab every entry thats in a list? or is it better to loop the list and use "get"?

Alexander

Alexander

By default Python sqlite3 library allows max 999 variables in query. Each id in list passed in variable. You can split list to several lists of size, say, 100 or 500.

In the future we can inline each id value into the query and not use variables, but it should be done carefully in order to avoid SQL injection vulnerablility

Elraro

Elraro

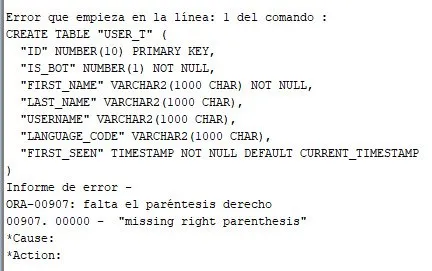

class User_t(db.Entity):

id = PrimaryKey(int)

is_bot = Required(bool)

first_name = Required(str)

last_name = Optional(str)

username = Optional(str)

language_code = Optional(str)

queries = Set('QueryHistory')

results = Set('ResultHistory')

first_seen = Required(datetime.datetime, sql_default='CURRENT_TIMESTAMP')

Henri

Henri

stsouko

stsouko

Christian

Christian