Lucky

Lucky

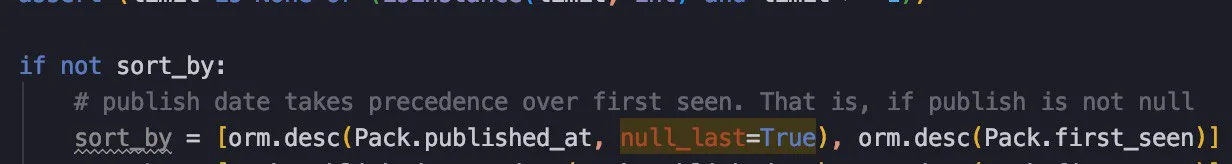

How do I set the posgres NULLS LAST keyword for the order_by clause with orm.desc(Table.column)?

J J

J J

How do I set the posgres NULLS LAST keyword for the order_by clause with orm.desc(Table.column)?

you can combine orm.raw_sql('NULLS LAST') in your order_by()?

Vitaliy

Vitaliy

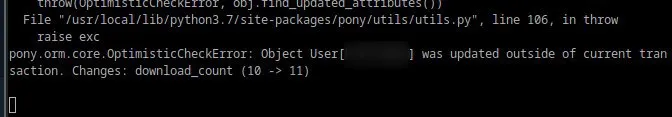

Hi @metaprogrammer! Can you please tell in which cases can this error encounter:

File "/home/xserver/env/py374/lib/python3.7/site-packages/pony/orm/core.py", line 301, in enter

assert local.prefetch_context is None

AssertionError

I have a long chain of events and it is hard to debug. Maybe you can suggest anything.

Vitaliy

Vitaliy

Hi @metaprogrammer! Can you please tell in which cases can this error encounter:

File "/home/xserver/env/py374/lib/python3.7/site-packages/pony/orm/core.py", line 301, in enter

assert local.prefetch_context is None

AssertionError

I have a long chain of events and it is hard to debug. Maybe you can suggest anything.

Turned on sql_debugging and noticed that there was a COMMIT between some queries. Nevermind 🙂

Vitaliy

Vitaliy

Hmm... I have ensured that I never call explicit commit() but I see following in debug info:

...several SELECTs...

SWITCH FROM AUTOCOMMIT TO TRANSACTION MODE

INSERT INTO .............

EXECUTEMANY (1)

INSERT INTO ............

COMMIT

...further SELECTs

Can pony commit transaction implicitly?

Matthew

Matthew

@metaprogrammer did you ever figure out what was causing ProgrammingError: can't adapt type 'TrackedDict’ which I mentioned a few months ago? It has appeared for me again

Matthew

Matthew

before, I think you blamed corrupt database rows? I’m not sure if I am remembering correctly

Vitaliy

Vitaliy

When a db_session scope ends it will commit

Session doesn't ends, db_session wraps whole wsgi application. Moreover, I reproduce it in python shell when pony.MODE is INTERACTIVE

Vitaliy

Vitaliy

I found a workaround. My code fails at this select:

for user in select(w for w in Worker if 'billing' in w.depts.email)

I changed to the following and it's OK:

for user in Dept.get(email='billing').workers

So it looks like a bug, I think.

Matthew

Matthew

Traceback (most recent call last):

File "schedulers.py", line 229, in <module>

schedule(schedulers)

File "schedulers.py", line 199, in schedule

scheduled = scheduler(job_capacity / (len(schedulers) - scheduler_counter))

File "<auto generated wrapper of feedback_alerts_scheduler() function>", line 2, in feedback_alerts_scheduler

File "/root/app/.env/local/lib/python2.7/site-packages/pony/orm/core.py", line 528, in new_func

result = func(*args, **kwargs)

File "schedulers.py", line 141, in feedback_alerts_scheduler

start=1):

File "/root/app/.env/local/lib/python2.7/site-packages/pony/orm/core.py", line 6221, in next

qr._items = qr._query._actual_fetch(qr._limit, qr._offset)

File "/root/app/.env/local/lib/python2.7/site-packages/pony/orm/core.py", line 5771, in _actual_fetch

sql, arguments, attr_offsets, query_key = query._construct_sql_and_arguments(limit, offset)

File "/root/app/.env/local/lib/python2.7/site-packages/pony/orm/core.py", line 5751, in _construct_sql_and_arguments

query._for_update, query._nowait, query._skip_locked)

File "/root/app/.env/local/lib/python2.7/site-packages/pony/orm/sqltranslation.py", line 753, in construct_sql_ast

limit, offset = combine_limit_and_offset(translator.limit, translator.offset, limit, offset)

File "/root/app/.env/local/lib/python2.7/site-packages/pony/orm/sqltranslation.py", line 1143, in combine_limit_and_offset

assert limit2 is None or limit2 >= 0

AssertionError

Alexander

Alexander

So, it seems that limit that you pass to query is negative?

I'll add a check for that with clear error message

Matthew

Matthew

Yes that is correct. I think it was a coincidence that it happened after upgrading pony, or pony is now more strict about negative numbers

Adam

Adam

not having a problem more looking for performance improvement:

i have alot of data and trying to add it to my DB while making sure i dont have duplicates, but it seams to take over 24 hours for adding 6k+ entries

Sigmund

Sigmund

not having a problem more looking for performance improvement:

i have alot of data and trying to add it to my DB while making sure i dont have duplicates, but it seams to take over 24 hours for adding 6k+ entries

24 hours for 6000 records is 14.4 seconds per record. That doesn't seem right! Read here for some info on how to speed up sqlite inserts: https://www.sqlite.org/faq.html#q19

Matthew

Matthew

Is it possible to specify JSONB indexes on postgres from pony, or do they need to be specified as sql?

Matthew

Matthew

create index idx_facebookdatarecord__record_data__impressions on facebookdatarecord ((record_data->>'impressions'));

Alexander

Alexander

Information on google for this is all over the place, would this be a reasonable index?

You mean to add direct support of such indexes to pony?

I think it is a good suggestion, but we need to extend pony API for defining indexes for that. Right now only columns are supported, without ASC/DESC specification, arbitrary expressions or JSONB fields.

I think we need to add migrations first (they are really close). Can you add a new issue about jsonb indexes support?

> I care about whether record_data[‘impressions’] > 0 - to express it in a pythonic way

With index created as specified above PostgreSQL should consider it for this expression

Alexander

Alexander

I it's possible to use greatest command postgresql in a order_by function?

I think it should work already:

query = select(x for x in X if x.a > 10)

query = query.filter(lambda x: x.b < 20)

query = query.order_by(lambda x: max(x.p, x.q))

Pony translate Python max function to SQL MAX or GREATEST depending on context. If you pass several arguments it will be translated to GREATEST(...)

Matteo

Matteo

I think it should work already:

query = select(x for x in X if x.a > 10)

query = query.filter(lambda x: x.b < 20)

query = query.order_by(lambda x: max(x.p, x.q))

Pony translate Python max function to SQL MAX or GREATEST depending on context. If you pass several arguments it will be translated to GREATEST(...)

thanks but with max I have this error

pony.orm.core.ExprEvalError: max([sum(u.score.try_succesful) + sum(u.score.try_not_succesful)], 1) raises NameError: name 'u' is not defined

this is the code (User is a db class)

ranking = select((sum(u.score.score),

float(sum(u.score.try_succesful)) /

(sum(u.score.try_succesful) +

sum(u.score.try_not_succesful)),

u) for u in User).\

order_by(desc("float(sum(u.score.try_succesful))/" +

"max([(sum(u.score.try_succesful)+sum(" +

"u.score.try_not_succesful))],1)")). \

order_by(desc('sum(u.score.score)'))

Matteo

Matteo

FROM "user" "u"

LEFT JOIN "score" "score"

ON "u"."id" = "score"."user"

GROUP BY "u"."id"

ORDER BY coalesce(SUM("score"."score"), 0) DESC, ((coalesce(SUM("score"."try_succesful"), 0))::double precision / greatest((coalesce(SUM("score"."try_succesful"), 0) + coalesce(SUM("score"."try_not_succesful"), 0)), 1)) DESC

this is the sql code translate thank you

Alexander

Alexander

Maybe it is too complex to be worth adding to pony

I think we will eventually add it. We just need to found pythonic API for index creation and implement it. Maybe something like that:

class MyEntity(db.Entity):

a = Required(int)

b = Required(str)

c = Optional(int)

data = Required(Json)

index1 = Index(a, b)

index2 = Index(b, desc(c))

index3 = Index(lambda obj: (obj.b, desc(obj.a + obj.c)))

index4 = Index(lambda obj: obj.data["foo"])

#todo

Matthew

Matthew

I have a query which takes approx 125ms in postgres, but approx 1 second when done through pony. Is has just under 12,000 results, and uses json

Alexander

Alexander

12000 rows is pretty big amount. Is it really necessary to load them all?

In general, creation of objects is slower than just fetching rows from the database, because object has complex internal state and interconnected with related objects.

Speaking about Json field, when Pony unpacks jsonb from the database to Python structures, it then replaces dicts and lists to special subclasses TrackedDict and TrackedList which track changes and notify objects that need to be saved to the database if json content was modified.

So, it is not surprising if loading objects is 8 times slower than fetching raw data from database connection. Maybe we can optimize it a bit, but I don't think the improvement will be solid

Matthew

Matthew

Unfortunately I do need to load them all, maybe I should do a raw query without creating pony objects then?

Matthew

Matthew

@metaprogrammer you were right, I didn’t need to load all of that data, a lot of it was redundant

Alexander

Alexander

At this moment you need to add field manually, by executing ALTER TABLE sql command, for example in PgAdmin interface. What is your attribute definition?

Alexander

Alexander

Yes, but for strings we already have empty value - it's empty string. It is not necessary to have two different empty values at the same time

Marco

Marco

Marco

Marco

Sorry, but pony 0.7.10 requires Python of one of the following versions: 2.7, 3.3-3.7. You have version 3.8.0

Please fix this ASAP