BinaryByter

Usually for deep learning you give something like 3 or 4

Well yeah, but even with 12 the NaN shouldn't occur.

Besides, it occurs with layers like 5000-200-500 as well.

Mat

Well yeah, but even with 12 the NaN shouldn't occur.

Besides, it occurs with layers like 5000-200-500 as well.

Are 5k, 200, and 500 the number of neurons in each layer?

Defragmented

But it shouldn't output 'not a number', should it?

"Or rather: when solving for exp(x) = 0, x is undefined"

Do you have access to the activation/backprop functions?

If yes, add a condition: if a value is NaN, stop and dump the whole NN.

I had such a problem, but in my case it was because I used a bad activation function and didn't normalize the inputs into 0...1, so the values exploded.
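A minimal sketch of the normalization mentioned above, assuming plain min-max scaling of a list of inputs into 0...1. The function name `normalize_01` and the sample inputs are hypothetical, not taken from anyone's code in this chat.

```python
def normalize_01(values):
    """Min-max scale a list of numbers into the range 0..1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # All inputs are identical: avoid 0/0 (which would itself give NaN)
        # and map everything to 0.0 instead.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


raw_inputs = [0.0, 50.0, 255.0]  # hypothetical, pixel-like inputs
scaled = normalize_01(raw_inputs)
```

Note the guard for the case where all inputs are equal: without it, the scaling step would divide zero by zero.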

BinaryByter

Defragmented

That's good, then you can add a stop-and-save condition to the activation/backprop functions.
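The stop-and-save idea could look roughly like this. It is only a sketch: `check_and_dump`, `NanDetected`, and the shape of `network_state` are hypothetical names chosen for illustration, and the real network in this chat may store its state quite differently.

```python
import json
import math
import os
import tempfile


class NanDetected(Exception):
    """Raised when a NaN shows up in a layer's values."""


def check_and_dump(layer_index, values, network_state, dump_path):
    """Return `values` unchanged, or dump the state and stop on the first NaN."""
    for neuron_index, v in enumerate(values):
        if math.isnan(v):
            with open(dump_path, "w") as f:
                # NaN is not a valid JSON number, so the state is stored
                # as its repr() string rather than as raw floats.
                json.dump(
                    {
                        "layer": layer_index,
                        "neuron": neuron_index,
                        "state": repr(network_state),
                    },
                    f,
                )
            raise NanDetected(f"NaN at layer {layer_index}, neuron {neuron_index}")
    return values


# Usage: call the check right after a layer's activations or derivatives
# are computed. The values and state below are made up.
dump_path = os.path.join(tempfile.gettempdir(), "nn_dump.json")
try:
    check_and_dump(1, [0.5, float("nan")], {"weights": [[0.1, 0.2]]}, dump_path)
    stopped = False
except NanDetected as err:
    stopped = True
    message = str(err)
```

Swapping `math.isnan(v)` for `not math.isfinite(v)` would also catch infinities, which often show up a step or two before the first NaN.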

BinaryByter

When you have a dump, you will see where it happened and can analyze the nearby neurons.

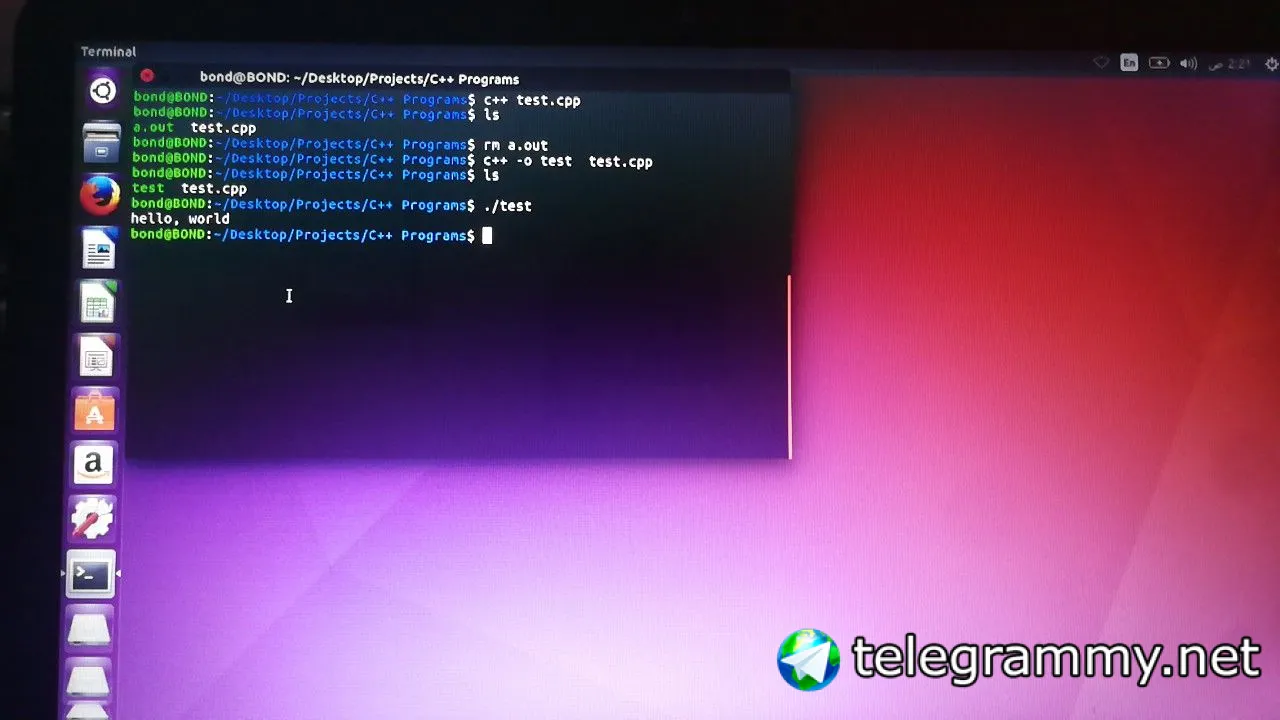

It happens in the function "calculateDerivatives"

Defragmented

Okay. And the error is in the second layer?

Did you check that all the links your neurons read from lead to the correct neurons in the previous layer?

BinaryByter

You can't stop it if you don't know what leads to it.

A derivative gets calculated and becomes NaN.

BinaryByter

What's the input data to the derivative when it produces NaN?

The derivative isn't calculated over input data.

Defragmented

I'm tired, I'll check tomorrow in front of a PC again.

Anyway, if (x==0){x=0.00001} might work as a last resort.
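As a rough illustration of that last-resort guard, here is a sketch in Python. The constant 0.00001 comes from the message above. The helper names `guard_zero` and `safe_ratio`, and the idea that `x` ends up as a denominator, are assumptions made only for this example.

```python
EPSILON = 0.00001  # value taken from the message above


def guard_zero(x, eps=EPSILON):
    """Replace an exact zero with a small constant (last-resort workaround)."""
    return eps if x == 0 else x


def safe_ratio(numerator, denominator):
    # Hypothetical use: keep a division from hitting a zero denominator.
    return numerator / guard_zero(denominator)


guarded = guard_zero(0.0)
untouched = guard_zero(2.0)
```

A guard like this only hides the symptom. If a zero was never expected at that point, the dump from a NaN check is still the better way to find out where it came from.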

Defragmented

I have no division that takes the derivative as dividend

The derivative is the difference between the actual signal and the preferred signal, right?

BinaryByter

The derivative is the difference between the actual signal and the preferred signal, right?

It's the dError/dWeight.
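To make the difference between the two definitions concrete, here is a small sketch for a single linear neuron with squared error, E = 0.5 * (w * x - target)^2. The model, the numbers, and the function names are assumptions chosen for illustration and are not the network discussed here. It compares the analytic dError/dWeight with a finite-difference estimate, which is also a cheap sanity check when a derivative looks suspicious.

```python
def error(w, x, target):
    """Squared error of a single linear neuron: 0.5 * (w*x - target)^2."""
    return 0.5 * (w * x - target) ** 2


def analytic_gradient(w, x, target):
    """dError/dWeight by the chain rule: (output - target) * input."""
    return (w * x - target) * x


def numeric_gradient(w, x, target, h=1e-6):
    """Central finite-difference estimate of dError/dWeight."""
    return (error(w + h, x, target) - error(w - h, x, target)) / (2 * h)


w, x, target = 0.3, 2.0, 1.0  # made-up values
g_analytic = analytic_gradient(w, x, target)
g_numeric = numeric_gradient(w, x, target)
```

For this toy model the output-minus-target difference is only one factor of the derivative; it still gets multiplied by the input that the weight is attached to.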

Kelvin

klimi

MᏫᎻᎯᎷᎷᎬᎠ

Dima
