Dumb

The problem shows up whenever you try to read the char value.

Maybe you need a different way to store it.

Nils

How can we use the graphics.h header file in VS Code?

You don't, since graphics.h is not part of Windows NT or of any standard C/C++ library. It comes from Borland's Turbo C/C++ compilers for DOS.

Jagadeesh

1. What is the output of the following printf?

printf("%d",34+24/12%3*5/2-4); answer with explanation

klimi

1. What is the output of the following printf?

printf("%d",34+24/12%3*5/2-4); answer with explanation

test.c: In function ‘main’:
test.c:5:3: error: unknown type name ‘answer’
    5 |   answer with explanation return 0;
      |   ^~~~~~
test.c:5:15: error: expected ‘=’, ‘,’, ‘;’, ‘asm’ or ‘__attribute__’ before ‘explanation’
    5 |   answer with explanation return 0;
      |               ^

Anunay

1. What is the output of the following printf?

printf("%d",34+24/12%3*5/2-4); answer with explanation

https://en.cppreference.com/w/c/language/operator_precedence

Anunay

it's kinda useless because my exam is already over

Sure, knowledge is completely useless after the exam

Anonymous

Sure, knowledge is completely useless after the exam

Exactly. That's what I've been saying.

Anton

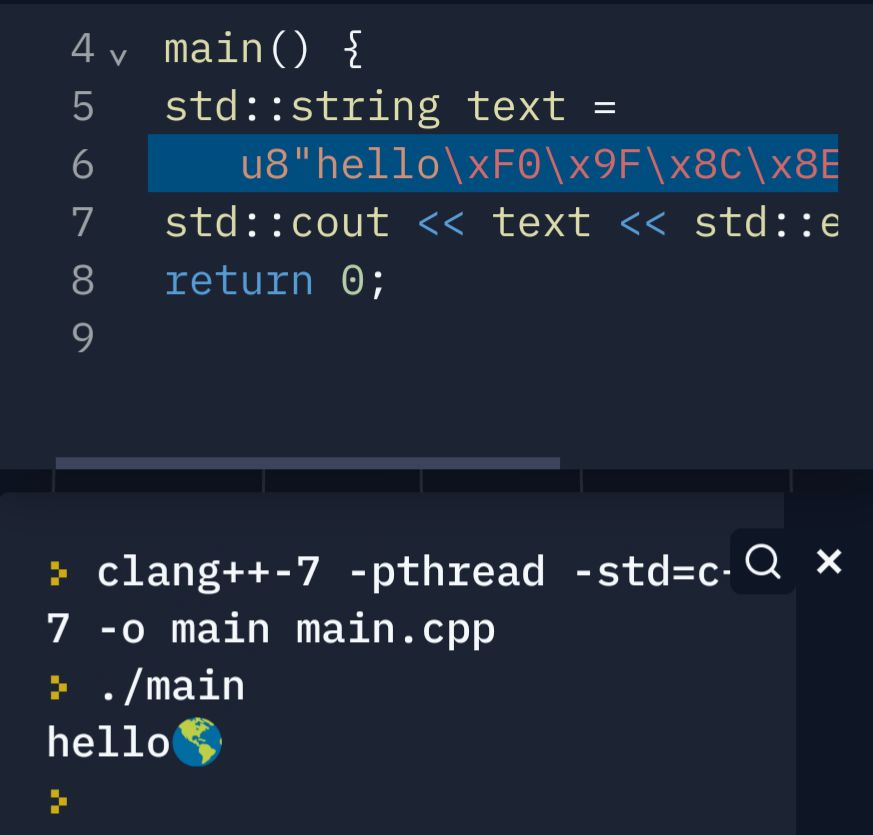

Hi. Is it possible to decode a std::string so that the universal character names in it are translated into the corresponding symbols? For example, "Hello, \uD83C\uDF0E." would become "Hello, 🌎."

Luca

Luca

Hello guys, I have two .cpp files in the same directory (file1.cpp and file2.cpp), and both of them have an int main() function.

I would like to run file1.cpp, which performs a certain task, but before that it has to execute file2.cpp.

I'm making a Unix app, so is it fine to write something like this in file1.cpp:

pid_t pid = fork();
if (pid == 0) {
    system("./file2");
}
else {
    task1();
}

Anonymous

Hello guys, I have two .cpp files in the same directory (file1.cpp and file2.cpp), and both of them have an int main() function.

I would like to run file1.cpp, which performs a certain task, but before that it has to execute file2.cpp.

I'm making a Unix app, so is it fine to write something like this in file1.cpp:

pid_t pid = fork();
if (pid == 0) {
    system("./file2");
}
else {
    task1();
}

Why are you hell bent on naming all the functions main()? You asked a similar question yesterday if I remember correctly. Why can't you name the function as something else instead of main in file2.cpp and call that from the main function in file1.cpp?

Anonymous

Now, it just prints Hello, \uD83C\uDF0E.

How are you printing it out? And what is the type of your string?

Anonymous

std::cout <<

First of all, you have to make sure that the code points are valid. \uD83C on its own is not a valid code point in either UTF-8 or UTF-16, because it is a surrogate. So are you sure these are two separate code points, or is it a single UTF-32 code point?

Anton

First of all, you have to make sure that the code points are valid. \uD83C on its own is not a valid code point in either UTF-8 or UTF-16, because it is a surrogate. So are you sure these are two separate code points, or is it a single UTF-32 code point?

A simpler example with a single 16-bit character, 中 (\u4e2d), doesn't work either. And I verified the earth symbol example with an external decoder; it is valid (I can't share the link due to the chat rules).

Luca

Why are you hell bent on naming all the functions main()? You asked a similar question yesterday if I remember correctly. Why can't you name the function as something else instead of main in file2.cpp and call that from the main function in file1.cpp?

Because I have to use a function that is compiled with a specific makefile, and I run into problems when I replace main with a function name.

Anshul

Sure, knowledge is completely useless after the exam

When did this happen? When did the admin change? 😳

olli

Because I have to use a function that is compiled with a specific makefile, and I run into problems when I replace main with a function name.

Change the makefile?

Spawning a new process every time is also far less efficient than just calling another function.

Otherwise you need to create a new process and wait for it to terminate, which works differently on every platform.

Anonymous

A simpler example with a single 16-bit character, 中 (\u4e2d), doesn't work either. And I verified the earth symbol example with an external decoder; it is valid (I can't share the link due to the chat rules).

It is not valid in UTF-16 or in UTF-8 as written, which is why I asked whether you are using the right encoding. I'm not talking about the Unicode standard here; I'm talking about the C++ standard.

The problem is that the C++ standard specifies that \u accepts only code points, not code units. You are specifying a surrogate pair with \u, which the C++ standard explicitly forbids. Unless you show me how you are encoding the string (i.e. the code), there is not much more I can tell you.

By default, the bytes in a string are printed as-is and interpreted in the local machine's encoding. Unless the encoding is specified correctly, the printed values will not be meaningful, and your terminal also has to support that encoding. Unless all of this lines up, you will not see the output you expect.

Luca

Change the makefile?

Spawning a new process every time is also far less efficient than just calling another function.

Otherwise you need to create a new process and wait for it to terminate, which works differently on every platform.

That was the main problem.

I tried several changes, but I wasn't able to change the makefile.

Anonymous

Thanks! By the way, what is that IDE of yours?

It is not an IDE. It is an online compiler:

https://replit.com/languages/cpp

数学の恋人

How can I access a C++ class variable in another C++ file if my file doesn't have a .h?

You could include that .cpp file, though it's a very bad thing to do.

Amu

You could include that .cpp file, though it's a very bad thing to do.

I tried that, and it broke my whole code.

Anonymous

How can I access a C++ class variable in another C++ file if my file doesn't have a .h?

You can't. There is the hack of including the .cpp file, but that will most likely result in an ODR violation or circular inclusion problems.

The C++ compilation process (excluding modules) works by compiling each .cpp file, together with everything it #includes, as a separate translation unit into an object file. Within each translation unit, the compiler must see the class definition before it lets you define variables of that class type or access their members.

Amu

Does anyone know how to do fine-grained locking? I have a queue of tasks and I need to lock them individually so that multiple threads can work on them at once.

Anonymous

Does anyone know how to do fine-grained locking? I have a queue of tasks and I need to lock them individually so that multiple threads can work on them at once.

There are multiple ways you could do this.

1. Use a lock-free queue.

2. Have a queue but let only one thread access it. This thread takes tasks off one by one and passes them to a thread pool. The same thread also handles pushing tasks into the queue.

3. Use promises and futures, and store the futures in the queue instead of the tasks themselves. The promises can be handled by a thread pool executor service.

Amu

There are multiple ways you could do this.

1. Use a lock-free queue.

2. Have a queue but let only one thread access it. This thread takes tasks off one by one and passes them to a thread pool. The same thread also handles pushing tasks into the queue.

3. Use promises and futures, and store the futures in the queue instead of the tasks themselves. The promises can be handled by a thread pool executor service.

I see. Say I have items in a queue: a customer wants to buy one item, while a supplier wants to modify another item and a second supplier wants to change the price of a third. Would your tips work in this case as well? (#1 won't work because I'm required to use locks.)

Anonymous

I see. Say I have items in a queue: a customer wants to buy one item, while a supplier wants to modify another item and a second supplier wants to change the price of a third. Would your tips work in this case as well? (#1 won't work because I'm required to use locks.)

All three would work. If you are required to use locks, then 2 and 3 are both eligible, though with option 3 the locks are hidden from your code.

Jaime

Is it possible to put a countdown in a program so that it counts down in parallel, with no sleep and no threads?

Peace

How is 'Hello world' getting printed here?

#include <iostream>
#include <string>
using namespace std;

int main() {
    string greet("Hello world");
    char *char_array = &greet[0];
    cout << char_array << "\n"; // Hello world
    return 0;
}

Peace

From char_array, which is initialized from greet.

char_array stores the address of the first element of the greet string. Then how?

Anton

char_array stores the address of the first element of the greet string. Then how?

It stores a pointer to the string's character data, and cout prints that data up to the terminating '\0'.

Suka

How is 'Hello world' getting printed here?

#include <iostream>
#include <string>
using namespace std;

int main() {
    string greet("Hello world");
    char *char_array = &greet[0];
    cout << char_array << "\n"; // Hello world
    return 0;
}

Perhaps when you pass &greet[0], it returns the same address as greet.c_str()? Correct me if I'm wrong.

Peace

How do both of these cout statements print the same output?

char char_array_1[] = {'H','e','l','l','o',' ','W','o','r','l','d','\0'};
cout << char_array_1 << endl;      // Hello World
cout << &char_array_1[0] << endl;  // Hello World

Peace

Both have the same address.

But in the second cout statement we only use the first element of char_array_1, so how is it printing the whole char_array_1?

Khaerul

But in the second cout statement we only use the first element of char_array_1, so how is it printing the whole char_array_1?

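A C string is a one-dimensional array of characters terminated by a null character '\0'.

char_array_1 is equivalent to &char_array_1[0]: when passed to cout, the array decays to a pointer to its first element, and cout prints from that address until it reaches the '\0'.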
