Mark ☢️

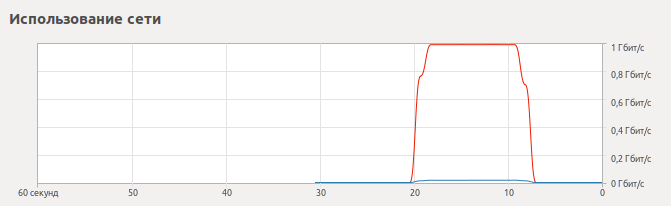

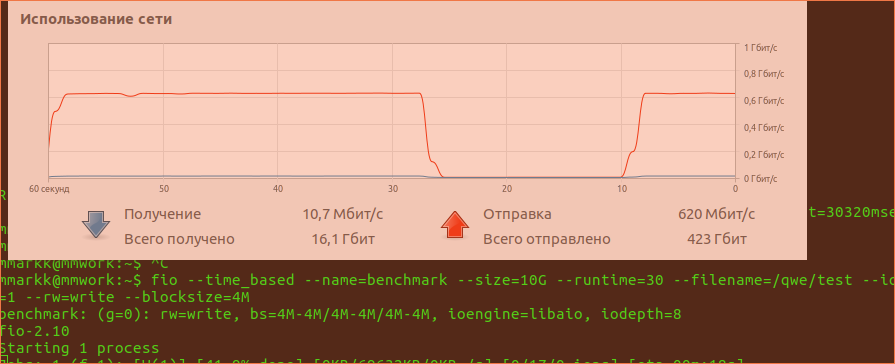

Doesn't look like it. Queues, load averages and the like produce a jagged graph, but here it's a strictly horizontal line.

Евгений

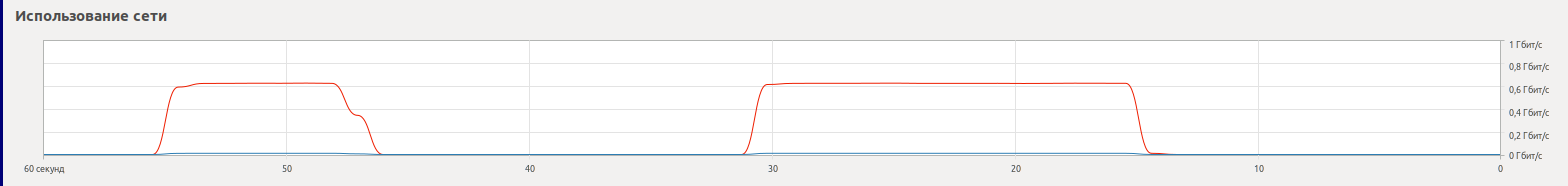

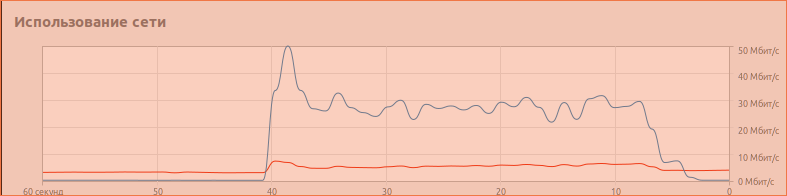

Next come games with irqbalance and using cgroup to pin the OSD to the same core that handles the interrupts from the NIC and the disk controller.
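Before pinning anything, it helps to see which core actually takes the device interrupts. A minimal sketch, assuming the usual `/proc/interrupts` layout (a header row of CPU names, then one row per IRQ with per-CPU counters); the sample text and the device name `eth0` are made up for illustration, not taken from the cluster discussed here:

```python
def irq_counts_by_cpu(interrupts_text, device_substring):
    """Sum per-CPU interrupt counters for rows that mention the device."""
    lines = interrupts_text.strip().splitlines()
    cpus = lines[0].split()                  # header row: CPU0 CPU1 ...
    totals = {cpu: 0 for cpu in cpus}
    for line in lines[1:]:
        if device_substring not in line:
            continue
        fields = line.split()
        # fields[0] is the IRQ label ("24:"), then one counter per CPU
        for cpu, count in zip(cpus, fields[1:1 + len(cpus)]):
            if count.isdigit():
                totals[cpu] += int(count)
    return totals


def busiest_cpu(totals):
    """Return the CPU name with the highest interrupt count."""
    return max(totals, key=totals.get)


# Hypothetical sample; on a real host read the text from /proc/interrupts.
SAMPLE = """           CPU0       CPU1
 24:        100       5000   PCI-MSI-edge      eth0-TxRx-0
 25:         10       2000   PCI-MSI-edge      eth0-TxRx-1
 26:        999          1   PCI-MSI-edge      ahci
"""

if __name__ == "__main__":
    totals = irq_counts_by_cpu(SAMPLE, "eth0")
    print(totals, busiest_cpu(totals))
```

The core it reports is the candidate for the cpuset that the OSD would be confined to; the pinning itself is not shown here.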

Дмитрий

Exactly, Carl

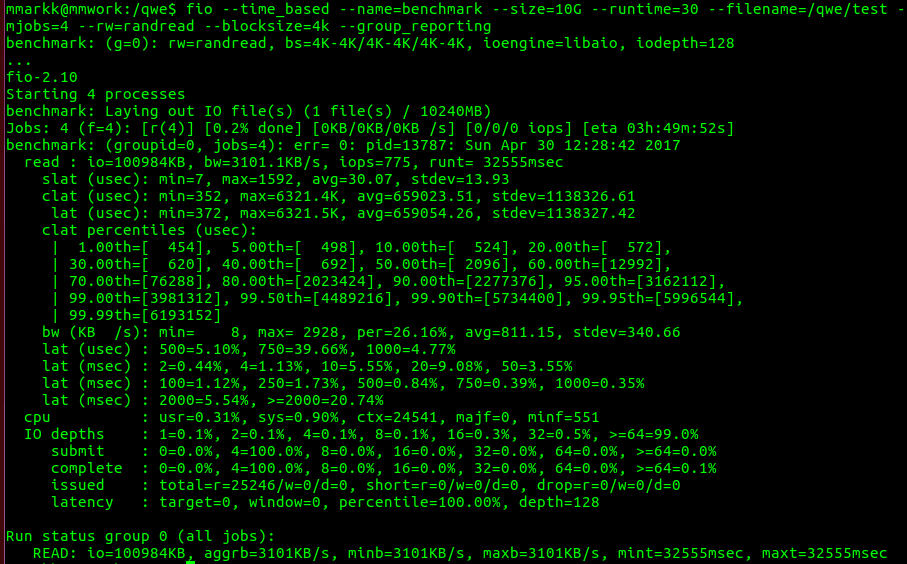

[root@on01 var]# fio --time_based --name=benchmark --size=10G --runtime=30 --filename=/var/lib/one/datastores/110/test --ioengine=libaio --randrepeat=0 --iodepth=128 --direct=1 --invalidate=1 --verify=0 --verify_fatal=0 --numjobs=4 --rw=randread --blocksize=4k --group_reporting

benchmark: (g=0): rw=randread, bs=4K-4K/4K-4K/4K-4K, ioengine=libaio, iodepth=128

...

fio-2.2.8

Starting 4 processes

Jobs: 4 (f=4): [r(4)] [100.0% done] [102.4MB/0KB/0KB /s] [26.3K/0/0 iops] [eta 00m:00s]

benchmark: (groupid=0, jobs=4): err= 0: pid=2689: Sun Apr 30 13:49:26 2017

read : io=2815.7MB, bw=95515KB/s, iops=23878, runt= 30186msec

slat (usec): min=6, max=698, avg=28.68, stdev= 9.47

clat (usec): min=223, max=617275, avg=21406.54, stdev=50069.48

lat (usec): min=250, max=617308, avg=21435.62, stdev=50069.73

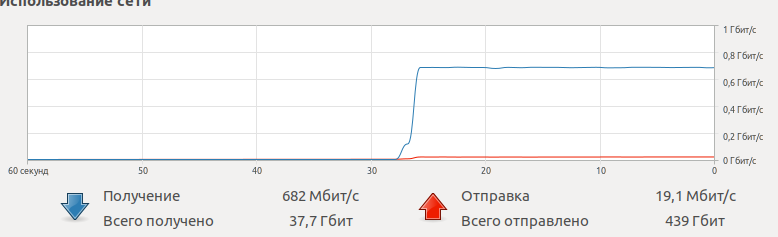

I get ~750 Mbit/s from a gigabit client.
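The ~750 Mbit/s figure and the average latency can be cross-checked against the fio summary above. A rough sketch, assuming fio 2.x reports "KB" as 1024 bytes and that all `iodepth * numjobs` requests stay in flight for the whole run (Little's law); the function names are illustrative:

```python
def bandwidth_kib_s(iops, block_size_bytes):
    """Bandwidth implied by an IOPS figure at a fixed block size."""
    return iops * block_size_bytes / 1024


def kib_s_to_mbit_s(kib_s):
    """Convert KiB/s to decimal megabits per second."""
    return kib_s * 1024 * 8 / 1e6


def mean_latency_us(outstanding_ios, iops):
    """Little's law: mean latency = requests in flight / throughput."""
    return outstanding_ios / iops * 1e6


# Numbers copied from the fio run above.
IOPS = 23878
BLOCK_SIZE = 4 * 1024        # --blocksize=4k
OUTSTANDING = 128 * 4        # --iodepth=128 times --numjobs=4
REPORTED_BW_KIB_S = 95515    # bw=95515KB/s
REPORTED_LAT_US = 21435.62   # lat avg

if __name__ == "__main__":
    print("bandwidth from IOPS, KiB/s:", bandwidth_kib_s(IOPS, BLOCK_SIZE))
    print("reported bandwidth, Mbit/s:", kib_s_to_mbit_s(REPORTED_BW_KIB_S))
    print("latency from Little's law, us:", mean_latency_us(OUTSTANDING, IOPS))
```

Under these assumptions the reported bandwidth comes to roughly 780 Mbit/s, in line with the ~750 Mbit/s observed, and the predicted latency of about 21.4 ms matches the reported average. The latency therefore mostly reflects 512 queued requests waiting behind a saturated gigabit link rather than slow individual reads.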

9 SSDs across 3 nodes, ceph version 12.0.2, CentOS 7.3 everywhere, MTU 9000.

I mount it like this:

10.1.16.1:6789:/ /var/lib/one/datastores/110 ceph rw,relatime,name=admin,secret=xxx,nodcache 0 0

The client runs ceph version 0.94.5, everything at defaults.

Mark ☢️

Дмитрий

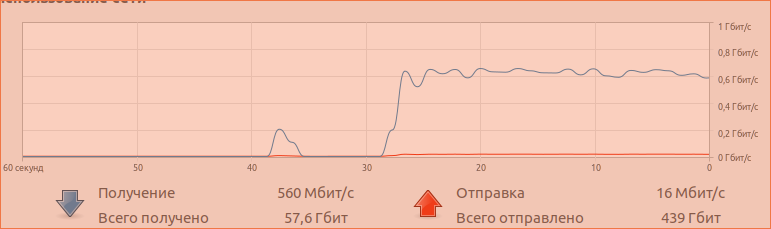

Random read, run locally on one node:

Jobs: 4 (f=4): [r(4)] [100.0% done] [222.2MB/0KB/0KB /s] [56.9K/0/0 iops] [eta 00m:00s]

benchmark: (groupid=0, jobs=4): err= 0: pid=202932: Sun Apr 30 15:20:32 2017

read : io=6591.2MB, bw=224542KB/s, iops=56135, runt= 30058msec

3 times worse than rbd.

Mark ☢️

At some point I said something about crappy Realtek. Don't fall for that. There's much worse out there.

Александр

Tverd
